NA-MIC Project Weeks

Automated Standardized Orientation for Cone-Beam Computed Tomography (CBCT)

Key Investigators

- Luc Anchling (UoM)

- Nathan Hutin (UoM)

- Maxime Gillot (UoM)

- Baptiste Baquero (UoM)

- Jonas Bianchi (UoM, UoP)

- Antonio Ruellas (UoM)

- Felicia Miranda (UoM)

- Selene Barone (UoM)

- Marcela Gurgel (UoM)

- Marilia Yatabe (UoM)

- Najla Al Turkestani (UoM)

- Hina Joshi (UoNC)

- Lucia Cevidanes (UoM)

- Juan Prieto (UoNC)

Project Description

Developing a standardized head orientation approach for medical and dental images is crucial to improving the reliability of automated image analysis for clinical decision-making. Manual, user-dependent head orientation is time-consuming and error-prone. This study therefore aims to automatically obtain the desired standardized orientation of Cone-Beam Computed Tomography (CBCT) scans, regardless of the patient’s positioning during the scan or any CT scanner initialization changes.

The Automated Standardized Orientation (ASO) tool presented in this work automatically identifies landmarks on 3D volumes regardless of orientation, using a deep learning landmark identification algorithm that handles images with random orientation (ALI_CBCT). ASO uses a landmark-based registration approach to automatically orient a 3D volume to a common space. The method aligns the identified landmarks to a set of reference ones. The method starts by aligning 3 randomly chosen landmarks and refines their position using an Iterative Closest Point (ICP) transform. The tool also allows user-selected landmarks for precision purposes. All the transforms computed during this process are concatenated and the final transform is applied to the CBCT volume.
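To illustrate the landmark-based alignment described above, here is a minimal Python sketch that fits a least-squares rigid transform between paired landmarks (the Kabsch method) using NumPy. It is not the ASO implementation: the function names `rigid_landmark_transform` and `apply_transform` and the landmark coordinates are hypothetical, and the sketch assumes each input landmark is already paired with its reference counterpart.

```python
import numpy as np


def rigid_landmark_transform(source, target):
    """Least-squares rigid transform (rotation + translation) mapping source onto target.

    Both inputs are (N, 3) arrays whose rows describe the same landmarks
    in the same order. Returns a 4x4 homogeneous matrix.
    """
    source = np.asarray(source, dtype=float)
    target = np.asarray(target, dtype=float)
    src_centroid = source.mean(axis=0)
    tgt_centroid = target.mean(axis=0)

    # Cross-covariance of the centered landmark sets.
    H = (source - src_centroid).T @ (target - tgt_centroid)
    U, _, Vt = np.linalg.svd(H)

    # Guard against a reflection so the result is a proper rotation.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = tgt_centroid - R @ src_centroid

    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


def apply_transform(T, points):
    """Apply a 4x4 homogeneous transform to an (N, 3) array of points."""
    points = np.asarray(points, dtype=float)
    return points @ T[:3, :3].T + T[:3, 3]


# Illustrative (made-up) landmark coordinates in millimetres.
input_landmarks = np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0], [0.0, 10.0, 0.0]])
reference_landmarks = np.array([[1.0, 2.0, 3.0], [1.0, 12.0, 3.0], [-9.0, 2.0, 3.0]])

T = rigid_landmark_transform(input_landmarks, reference_landmarks)
residual = np.linalg.norm(apply_transform(T, input_landmarks) - reference_landmarks, axis=1)
print("per-landmark residual (mm):", residual)
```

With known correspondences, a single least-squares fit like this already gives the optimal rigid alignment; an ICP-style refinement matters when correspondences have to be re-estimated between iterations.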

To make ASO more robust, a pre-orientation algorithm has been developed. This part uses a deep learning algorithm to identify the head orientation and then rotates the volume to the desired orientation. This algorithm is currently being tested and will be implemented in the ASO module. The model was trained on scans with random rotations applied.
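As a hedged illustration of how random rotations can be drawn for this kind of training, the sketch below samples a 3x3 rotation matrix from the QR decomposition of a Gaussian matrix. The function name `random_rotation` is hypothetical, and the source does not state how the authors sampled their rotations; resampling a CBCT volume with the resulting matrix is not shown.

```python
import numpy as np


def random_rotation(rng):
    """Draw a random 3x3 rotation matrix from a NumPy random Generator."""
    A = rng.standard_normal((3, 3))
    Q, R = np.linalg.qr(A)
    # Fix the sign ambiguity of the QR decomposition column by column.
    Q = Q * np.sign(np.diag(R))
    # Flip one axis if needed so the matrix is a proper rotation (det = +1).
    if np.linalg.det(Q) < 0:
        Q[:, 0] = -Q[:, 0]
    return Q


rng = np.random.default_rng(seed=0)
rotation = random_rotation(rng)

# Rotating landmark-like points about the origin (illustrative values).
points = np.array([[1.0, 0.0, 0.0], [0.0, 2.0, 0.0]])
rotated = points @ rotation.T
```

A rotation matrix preserves distances, so the rotated points keep their original norms.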

Objective

- Create a Slicer Module to use this algorithm with CBCT files

- Make the algorithm more robust to different head orientations

- Perform maintenance on the previously developed ASO module

Approach and Plan

- Develop, in collaboration with Nathan Hutin (ASO_IOS), a Slicer Module that makes ASO work for both IOS and CBCT files

- Integrate the pre-orientation algorithm into this module

- Use the CLI version of previously developed code to make ASO FULLY-Automated (without any input from the user)

Progress and Next Steps

- Slicer Module has been developed:

  - First, only a SEMI-Automated version was implemented (with scan and landmark files as inputs)

  - Then, a FULLY-Automated version was developed (with ONLY scan files as inputs and the ALI module running in the background)

- The pre-orientation algorithm (DenseNet169 from the MONAI library) has been implemented in the ASO module

- Receive input before deploying ASO to SlicerAutomatedDentalTool Extension

ReadMe

Automated Standardized Orientation (ASO)

Automated Standardized Orientation (ASO) is an extension for 3D Slicer that performs automatic orientation on either IOS or CBCT files.

ASO Modules

The ASO module provides a convenient user interface for orienting different types of scans:

- CBCT scan

- IOS scan

To select the Input Type in the Extension just select between CBCT and IOS here:

How does the module work?

2 Modes Available (Semi or Fully Automated)

- Semi-Automated (to only run the landmark-based registration with landmark and scans as input)

- Fully-Automated (to perform Pre Orientation steps, landmark Identification and ASO with only scans as input)

| Mode | Input |
|---|---|
| Semi-Automated | Scans, Landmark files |
| Fully-Automated | Scans, ALI Models, Pre ASO Models (for CBCT files), Segmentation Models (for IOS files) |

To select the Mode in the Extension just select between Semi and Fully Automated here:

The Fully-Automated Mode Input section is slightly different:

Input file:

| Input Type | Input Extension Type |
|---|---|
| CBCT | .nii, .nii.gz, .gipl.gz, .nrrd, .nrrd.gz |
| IOS | .vtk |

To select the Input Folder in the Extension just select your folder with Data here:

For IOS, the input has to be a scan with teeth segmentation. The teeth segmentation can be done automatically using the SlicerDentalModelSeg extension. The IOS file names need to include the type of jaw (Upper or Lower).
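The input rules above can be checked before running the module. The following is a minimal sketch using only the Python standard library; the names `SUPPORTED_EXTENSIONS`, `find_input_files`, and `jaw_type` are hypothetical, and the recursive folder search and case-insensitive matching are assumptions rather than documented behavior of the extension.

```python
from pathlib import Path

# Extensions taken from the Input file table above.
SUPPORTED_EXTENSIONS = {
    "CBCT": (".nii", ".nii.gz", ".gipl.gz", ".nrrd", ".nrrd.gz"),
    "IOS": (".vtk",),
}


def find_input_files(folder, input_type):
    """Return the sorted files under `folder` whose extension matches `input_type`."""
    extensions = SUPPORTED_EXTENSIONS[input_type]
    return sorted(
        path
        for path in Path(folder).rglob("*")  # assumption: search subfolders too
        if path.is_file() and path.name.lower().endswith(extensions)
    )


def jaw_type(path):
    """Return 'Upper' or 'Lower' if the file name contains it, otherwise None."""
    name = Path(path).name.lower()
    for jaw in ("Upper", "Lower"):
        if jaw.lower() in name:
            return jaw
    return None
```

For example, `find_input_files("my_data", "IOS")` would list the `.vtk` files in a hypothetical `my_data` folder, and any file for which `jaw_type` returns `None` would need to be renamed before use.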

**Test Files Available:**

You can download them either from the links below or by using the Download Test Files button.

| Module Selected | Download Link to Test Files | Information |
|---|---|---|
| Semi-CBCT | Test Files | Scan and Fiducial List for this Reference |
| Fully-CBCT | Test File | Only Scan |
| Semi-IOS | | Mesh and Fiducial List |
| Fully-IOS | | Only Mesh |

Reference:

The user has to choose a folder containing a Reference Gold File with an oriented scan with landmarks.

You can either use your own files or download ours using the Download Reference button in the module Input section.

| Input Type | Reference Gold Files |
|---|---|
| CBCT | CBCT Reference Files |
| IOS | IOS Reference Files |

To select the Reference Folder in the Extension just select your folder with Reference Data here:

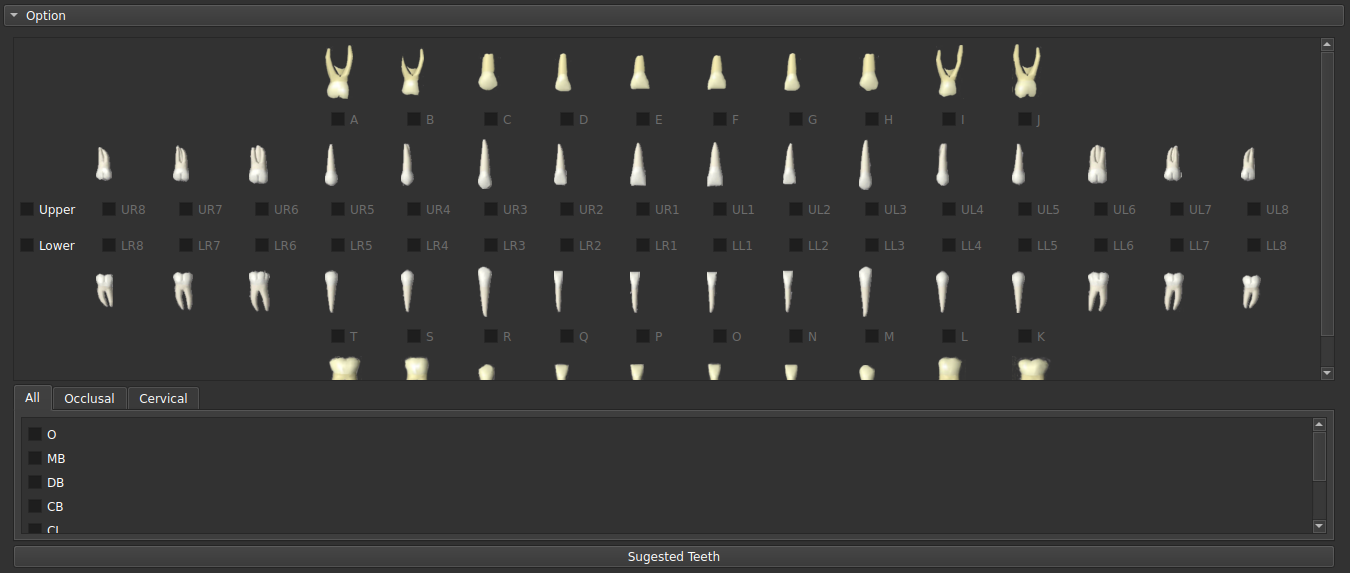

Landmark selection

The user has to decide which landmarks to use to run ASO.

| Input Type | Landmarks Available |
|---|---|
| CBCT | Cranial Base, Lower Bones, Upper Bones, Lower and Upper Teeth |
| IOS | Upper and Lower Jaw |

For IOS: The user has to indicate the name of the label array in the VTK surface. By default, the name is PredictedID.

The landmark selection is handled in the Option section:

For IOS:

For CBCT:

Models Selection

For the Fully-Automated Mode, models are required as input. Use the Download Models button or follow these instructions:

For CBCT (Details):

Pre-Orientation and ALI_CBCT models are both needed.

To add the Pre-Orientation models just download PreASOModels.zip, unzip it and select it here:

To add the ALI_CBCT models go to this link, select the desired models, unzip them in a single folder and select it here:

For IOS:

To add the Pre-Orientation models just download PreASOModels.zip, unzip it and select it here:

Outputs Options

You can choose the extension of the output files and the folder where they will be saved here:

Let’s Run it

Now that everything is in order, just press the Run button in this section:

Algorithm

The implementation is based on the iterative closest point (ICP) algorithm to perform a landmark-based registration. Some preprocessing steps are applied to make the orientation work better; they are described in the CBCT and IOS parts, respectively.

ASO CBCT

Fully-Automated mode:

1. A deep learning model is used to predict head orientation and correct it. Models are available for download (Pre ASO CBCT Models)

2. A Landmark Identification Algorithm (ALI CBCT) is used to determine user-selected landmarks

3. An ICP transform is used to match the input file to the reference

For the Semi-Automated mode, only step 3 is used to match the input landmarks with the reference landmarks.
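The project description notes that the transforms computed along the way are concatenated into one final transform before it is applied to the volume. A minimal sketch of that concatenation with 4x4 homogeneous matrices follows; the helper name `compose` and the two example matrices are hypothetical stand-ins for the pre-orientation and ICP results, not values produced by ASO.

```python
import numpy as np


def compose(*transforms):
    """Concatenate 4x4 transforms given in the order they are applied."""
    total = np.eye(4)
    for T in transforms:
        # Each later transform is applied after the ones accumulated so far.
        total = T @ total
    return total


# Hypothetical pre-orientation result: 90 degree rotation about the z axis.
pre_orientation = np.array([
    [0.0, -1.0, 0.0, 0.0],
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
])

# Hypothetical ICP result: a pure translation.
icp = np.eye(4)
icp[:3, 3] = [1.0, 2.0, 3.0]

final_transform = compose(pre_orientation, icp)

# Applying the single final transform to a homogeneous point.
point = np.array([1.0, 0.0, 0.0, 1.0])
moved = final_transform @ point
```

Composing once and applying a single transform avoids resampling the volume at every intermediate step.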

**Description of the tool:**

ASO IOS

Acknowledgements

Nathan Hutin (University of Michigan), Luc Anchling (UoM), Felicia Miranda (UoM), Selene Barone (UoM), Marcela Gurgel (UoM), Najla Al Turkestani (UoM), Juan Carlos Prieto (UNC), Lucia Cevidanes (UoM)

License

It is covered by the Apache License, Version 2.0:

http://www.apache.org/licenses/LICENSE-2.0